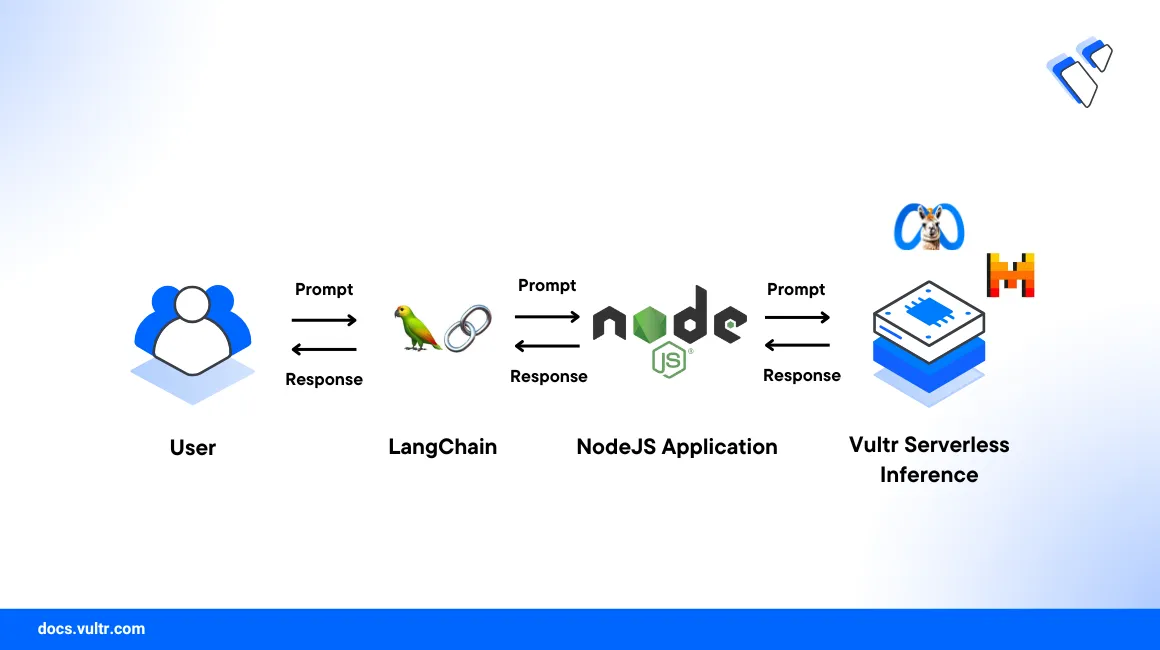

How to Use Vultr Serverless Inference in Node.js with LangChain

Vultr Serverless Inference allows you to run inference workloads for large language models such as Mixtral 8x7B, Mistral 7B, Meta Llama 2 70B, and more. Using Vultr Serverless Inference, you can run inference workloads without having to worry about the infrastructure, and you only pay for the input and output tokens.
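
Because billing is based on token counts, you can estimate the cost of a request with simple arithmetic. The following JavaScript sketch assumes hypothetical per-million-token rates (`HYPOTHETICAL_RATES`). These are placeholders, not Vultr's published prices, so substitute the rates that apply to your own subscription.

```javascript
// Hypothetical per-million-token rates in USD. These are placeholders,
// not Vultr's published prices; replace them with your subscription's rates.
const HYPOTHETICAL_RATES = {
  inputPerMillion: 0.2,
  outputPerMillion: 0.6,
};

// Estimate the cost of one request from its input (prompt) and
// output (completion) token counts.
function estimateCost(inputTokens, outputTokens, rates = HYPOTHETICAL_RATES) {
  const inputCost = (inputTokens / 1_000_000) * rates.inputPerMillion;
  const outputCost = (outputTokens / 1_000_000) * rates.outputPerMillion;
  return inputCost + outputCost;
}

// Example: a request with 1,500 input tokens and 500 output tokens.
console.log(`Estimated cost: $${estimateCost(1500, 500).toFixed(6)}`);
```

Input and output tokens are priced separately in this sketch because long prompts and long completions contribute to the total independently.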

This article demonstrates the step-by-step process to start using Vultr Serverless Inference in Node.js with LangChain.

Prerequisites

Before you begin, you must:

- Create a Vultr Serverless Inference subscription.

- Retrieve the API key for Vultr Serverless Inference.

- Install Node.js 20.x or later.

Set Up the Environment

Create a new project directory and navigate to the project directory.

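```console
$ mkdir vultr-serverless-inference-nodejs-langchain
$ cd vultr-serverless-inference-nodejs-langchain
```

Create a new Node.js project.

```console
$ npm init -y
```

Install the required Node.js packages. The `@langchain/openai` package provides the `ChatOpenAI` class, and `@langchain/core` provides the message classes that the script imports.

```console
$ npm install @langchain/openai @langchain/core
```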

Inference via LangChain

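LangChain's `@langchain/openai` package provides the `ChatOpenAI` chat model class for OpenAI-compatible APIs. Because Vultr Serverless Inference exposes an OpenAI-compatible API, you can use `ChatOpenAI` to make the API calls by setting its base URL to the Vultr endpoint.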
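
To see roughly what the `ChatOpenAI` class sends on your behalf, the following sketch builds an equivalent OpenAI-style chat completion request without sending it. It assumes the OpenAI API convention of a `POST` to `<baseURL>/chat/completions` with a bearer-token `Authorization` header. `buildChatRequest`, `example-key`, and `example-model` are hypothetical names used only for illustration.

```javascript
// Base URL for the Vultr Serverless Inference API, as used in this guide.
const BASE_URL = 'https://api.vultrinference.com/v1';

// Hypothetical helper: build (but do not send) an OpenAI-style chat
// completion request. The "/chat/completions" path and Bearer header follow
// the OpenAI API convention that OpenAI-compatible clients use.
function buildChatRequest(apiKey, model, messages) {
  if (!apiKey) {
    throw new Error('An API key is required');
  }
  return {
    url: `${BASE_URL}/chat/completions`,
    init: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ model, messages }),
    },
  };
}

// Build a request with placeholder values and inspect it.
const request = buildChatRequest('example-key', 'example-model', [
  { role: 'user', content: 'What is the capital of India?' },
]);
console.log(request.url);

// With a real key and model ID, Node.js 20's built-in fetch could send it:
//   const res = await fetch(request.url, request.init);
```

Using `ChatOpenAI` instead of hand-built requests lets LangChain handle message conversion, retries, and response parsing for you.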

Create a new JavaScript file named `inference-langchain.js`.

```console
$ nano inference-langchain.js
```

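Add the following code to `inference-langchain.js`.

```javascript
const { ChatOpenAI } = require('@langchain/openai');
const { HumanMessage, SystemMessage } = require('@langchain/core/messages');

// Read the API key from the environment
const apiKey = process.env.VULTR_SERVERLESS_INFERENCE_API_KEY;

// Set the model: replace the empty string with a model ID from the list
// List of available models: https://api.vultrinference.com/v1/chat/models
const model = '';

// Messages to send: a system instruction and the user's question
const messages = [
  new SystemMessage('You are a helpful assistant.'),
  new HumanMessage('What is the capital of India?'),
];

async function main() {
  // Fail fast if the API key or model is missing
  if (!apiKey || !model) {
    throw new Error('Set VULTR_SERVERLESS_INFERENCE_API_KEY and the model variable before running.');
  }

  // Point the OpenAI-compatible client at the Vultr Serverless Inference API
  const client = new ChatOpenAI({
    openAIApiKey: apiKey,
    modelName: model,
    configuration: {
      baseURL: 'https://api.vultrinference.com/v1',
    },
  });

  // Send the messages and print the text of the model's reply
  const llmResponse = await client.invoke(messages);
  console.log(llmResponse.content);
}

main().catch((error) => {
  console.error(error);
  process.exitCode = 1;
});
```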

Run the `inference-langchain.js` file.

```console
$ export VULTR_SERVERLESS_INFERENCE_API_KEY=<your_api_key>
$ node inference-langchain.js
```
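
If the variable is not exported in the current shell, `process.env.VULTR_SERVERLESS_INFERENCE_API_KEY` is `undefined` and the script cannot authenticate. A small helper such as the hypothetical `requireEnv` below fails fast with a clear message instead. This is a minimal sketch; the demo passes an explicit object so it runs without a real key, while in `inference-langchain.js` you would omit the second argument so it reads `process.env`.

```javascript
// Hypothetical helper: return a required environment variable, or throw a
// clear error if it is missing or blank. `env` defaults to process.env.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (typeof value !== 'string' || value.trim() === '') {
    throw new Error(`Missing environment variable ${name}; set it with: export ${name}=...`);
  }
  return value;
}

// Demo with an explicit object so it runs without a real key set.
// In inference-langchain.js you could instead write:
//   const apiKey = requireEnv('VULTR_SERVERLESS_INFERENCE_API_KEY');
const demoEnv = { VULTR_SERVERLESS_INFERENCE_API_KEY: 'demo-key' };
const key = requireEnv('VULTR_SERVERLESS_INFERENCE_API_KEY', demoEnv);

// Print only the key length, never the key itself
console.log(`Loaded API key (${key.length} characters)`);
```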

Here, the `inference-langchain.js` file uses the `ChatOpenAI` class from the `@langchain/openai` package to send a chat request to Vultr Serverless Inference. LangChain represents the conversation as message objects, such as `HumanMessage` for user input and `SystemMessage` for instructions that guide the model, both imported from the `@langchain/core/messages` package. For more information, refer to the LangChain documentation.
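
OpenAI-compatible APIs identify each message by a role: a `SystemMessage` corresponds to `system`, a `HumanMessage` to `user`, and an `AIMessage` to `assistant`. The following simplified sketch illustrates that mapping with plain objects. It is not LangChain's actual conversion code, and `toChatCompletionMessages` and `ROLE_BY_TYPE` are hypothetical names.

```javascript
// Plain-object stand-ins for LangChain message types, used only to
// illustrate the mapping; real code uses the message classes from
// @langchain/core/messages.
const ROLE_BY_TYPE = {
  system: 'system', // SystemMessage
  human: 'user', // HumanMessage
  ai: 'assistant', // AIMessage
};

// Hypothetical helper: convert typed messages to role-based chat messages.
function toChatCompletionMessages(messages) {
  return messages.map(({ type, content }) => {
    const role = ROLE_BY_TYPE[type];
    if (!role) {
      throw new Error(`Unsupported message type: ${type}`);
    }
    return { role, content };
  });
}

const payload = toChatCompletionMessages([
  { type: 'system', content: 'Answer in one word.' },
  { type: 'human', content: 'What is the capital of India?' },
]);
console.log(JSON.stringify(payload));
```

Adding previous model replies as `assistant` messages in the same array is how a multi-turn conversation is typically carried across requests.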

Conclusion

In this article, you learned how to use Vultr Serverless Inference in Node.js with LangChain. You can now integrate Vultr Serverless Inference into Node.js applications that use LangChain to generate completions from large language models.