Qwen3-VL (235B) Stress Testing

Updated on 11 March, 2026Comprehensive stress testing of Qwen3-VL-235B-A22B-Instruct (Vision-Language model, 235B parameters, 22B active) on 8x AMD Instinct MI325X GPUs.

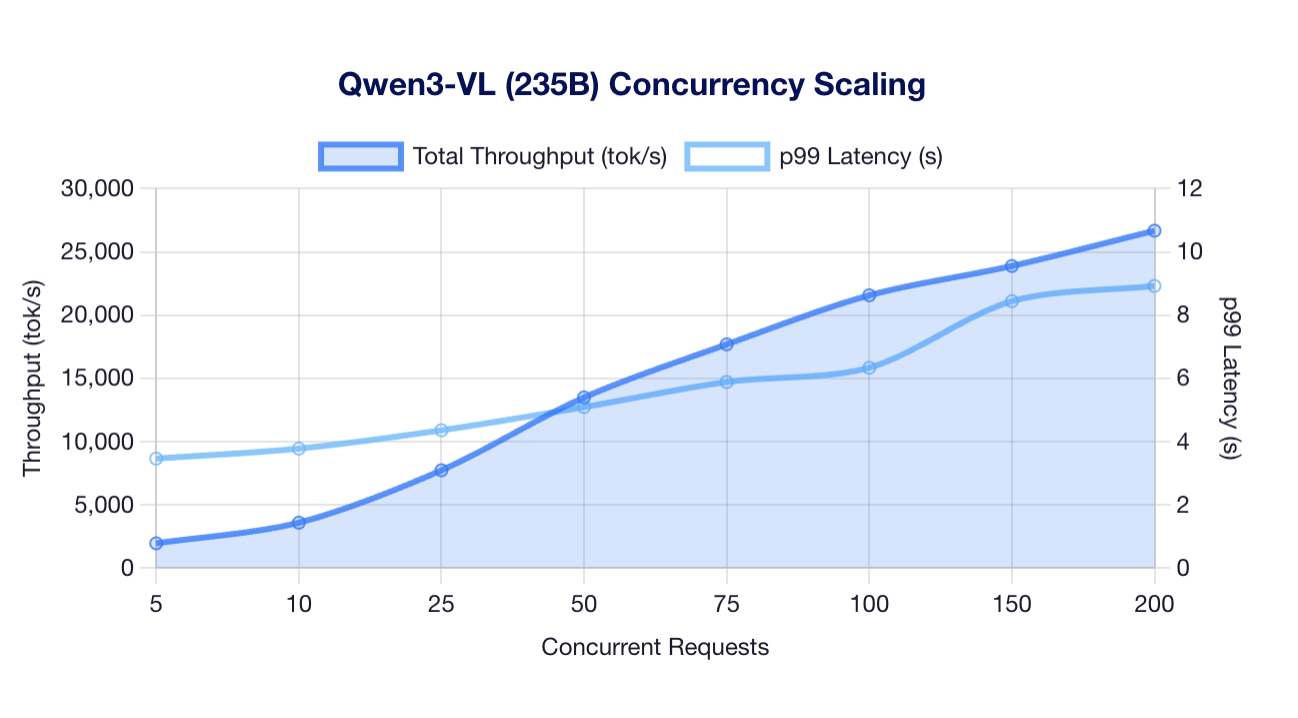

Concurrency Scaling

Scaling Results

| Concurrency | Throughput | Output tok/s | p99 Latency |

|---|---|---|---|

| 5 | 1,956 tok/s | 292 | 3.46s |

| 10 | 3,586 tok/s | 535 | 3.78s |

| 25 | 7,728 tok/s | 1,153 | 4.36s |

| 50 | 13,489 tok/s | 2,012 | 5.09s |

| 75 | 17,685 tok/s | 2,638 | 5.88s |

| 100 | 21,567 tok/s | 3,217 | 6.33s |

| 150 | 23,882 tok/s | 3,562 | 8.44s |

| 200 | 26,674 tok/s | 3,978 | 8.92s |

Observations:

- Near-linear scaling up to 200 concurrent requests

- Throughput increases 13.6x from 5 to 200 concurrent

- p99 latency increases proportionally (2.6x) - acceptable trade-off

- MoE architecture (22B active) enables efficient batching

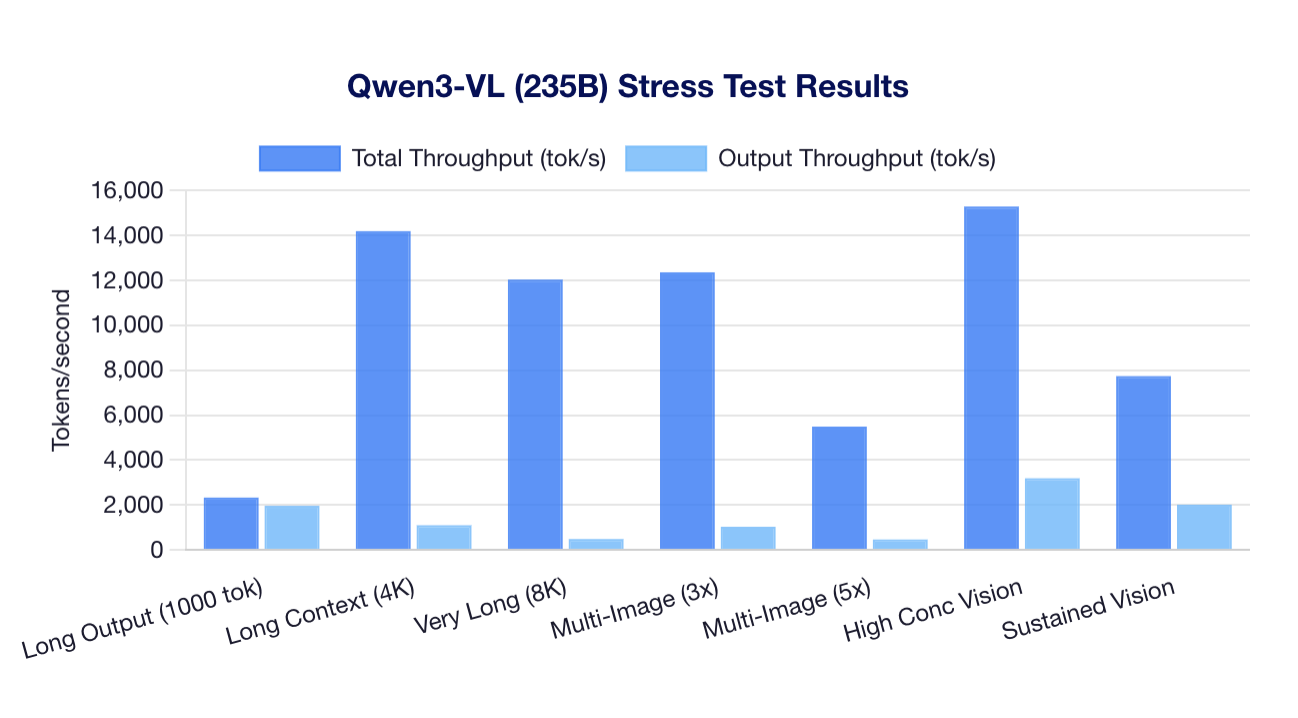

Stress Tests

Stress Test Results

| Test Type | Concurrency | Throughput | Output tok/s | p99 Latency |

|---|---|---|---|---|

| Long Output (1000 tokens) text | 50 | 2,324 tok/s | 1,959 | 11.78s |

| Long Context (4K) text | 25 | 14,193 tok/s | 1,091 | 4.22s |

| Very Long Context (8K) text | 12 | 12,037 tok/s | 478 | 4.00s |

| Multi-Image (3 per req) multi-image | 25 | 12,360 tok/s | 1,024 | 6.82s |

| Multi-Image (5 per req) multi-image | 12 | 5,486 tok/s | 457 | 10.65s |

| High Concurrency Vision vision | 100 | 15,290 tok/s | 3,183 | 9.62s |

| Sustained Vision vision | 50 | 7,734 tok/s | 2,008 | 10.05s |

Key findings:

- Long context prefill fast: 14,193 tok/s with 4K context

- Multi-image processing works: 3-5 images per request handled efficiently

- Long output generation: 1,959 tok/s output throughput

- All tests passed with 100% success rate

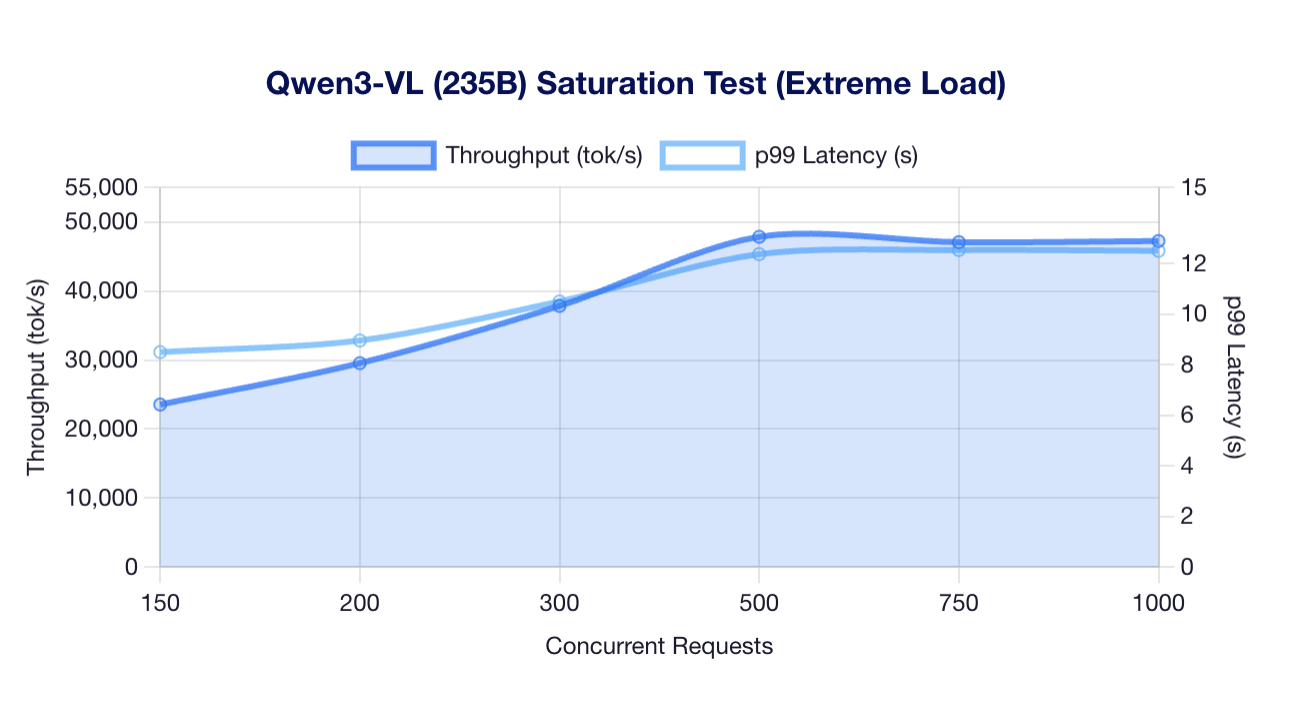

Saturation Testing

Extreme Load Results

| Concurrency | Throughput | Output tok/s | p99 Latency | Status |

|---|---|---|---|---|

| 150 | 23,557 tok/s | 3,513 | 8.50s | OK |

| 200 | 29,557 tok/s | 4,408 | 8.96s | OK |

| 300 | 37,850 tok/s | 5,645 | 10.50s | OK |

| 500 | 47,873 tok/s | 7,140 | 12.37s | PEAK |

| 750 | 47,092 tok/s | 7,023 | 12.53s | SATURATED |

| 1000 | 47,252 tok/s | 7,047 | 12.50s | SATURATED |

Observations:

- Peak throughput of 47,873 tok/s achieved at 500 concurrent

- Saturation begins at 750 concurrent (throughput plateau)

- 100% success rate maintained even under extreme load

- System remains stable at 1,000 concurrent requests

Recommendations

| Use Case | Concurrency | Expected Throughput |

|---|---|---|

| Low latency | 5–10 | 2,000–3,600 tok/s |

| Balanced | 25–50 | 7,700–13,500 tok/s |

| High throughput | 100–200 | 21,500–26,700 tok/s |

| Maximum throughput | 500 | 47,873 tok/s |

Test Configuration

| Parameter | Value |

|---|---|

| Model | Qwen/Qwen3-VL-235B-A22B-Instruct |

| Precision | BF16 |

| Tensor Parallelism | 8 |

| GPUs | 8x MI325X (256GB each) |

| Total VRAM | 2 TB |

| Test Mode | Thorough (3x multiplier) |

Launch Command

bash

docker run --rm \

--group-add=video \

--cap-add=SYS_PTRACE \

--security-opt seccomp=unconfined \

--device /dev/kfd \

--device /dev/dri \

-v ~/.cache/huggingface:/root/.cache/huggingface \

--env "HF_TOKEN=$HF_TOKEN" \

--env "VLLM_USE_TRITON_FLASH_ATTN=0" \

-p 8000:8000 \

--ipc=host \

vllm/vllm-openai-rocm:latest \

--model Qwen/Qwen3-VL-235B-A22B-Instruct \

--tensor-parallel-size 8 \

--max-model-len 32768 \

--kv-offloading-backend native \

--kv-offloading-size 64 \

--disable-hybrid-kv-cache-manager

Test Environment

| Specification | Value |

|---|---|

| GPU | 8x AMD Instinct MI325X |

| VRAM | 256 GB HBM3E per GPU (2 TB total) |

| Architecture | CDNA 3 (gfx942) |

| ROCm | 6.4.2-120 |

| vLLM | 0.14.1 |