Introduction

Human face detection is a computer vision technology that identifies and locates human faces in digital images or videos. It is a specific use case of object-class detection, which finds the locations and sizes of all objects in an image or video that belong to a given class, such as human faces, cars, or buildings.

Human face detection has uses in several fields including:

- Photography - recent smartphones and digital cameras use human face detection for autofocus capabilities.

- Facial recognition - human face detection is used in biometrics as a component of facial recognition systems, such as face unlock on smartphones and video surveillance.

- Marketing - ads can be targeted at a subgroup of people after detecting the face of anyone who walks past.

- Emotional inference - face detection is a first step in recognizing human emotion from a face.

- Security - provides surveillance and tracking of people in real time.

OpenCV (Open Source Computer Vision Library) is an open-source computer vision and machine learning software library. It is a library of programming functionality aimed at real-time computer vision. Computer vision is a field of Artificial Intelligence (AI) that enables computerized systems to derive meaningful information from digital visual inputs such as images and videos, and give recommendations or execute actions based on that information.

OpenCV speeds up the use of machine perception in commercial products and offers a common infrastructure for computer vision applications. It includes more than 2,500 optimized algorithms, a comprehensive set of both classic and state-of-the-art computer vision and machine learning algorithms. These algorithms are used for facial detection and recognition, object identification, tracking moving objects, overlaying scenery with augmented reality, and more.

OpenCV’s primary interface is in C++, but it has wrappers for languages like Python and JavaScript.

This guide uses OpenCV-Python which is the Python API binding for OpenCV.

Prerequisites

- Working knowledge of Python.

- Properly installed and configured Python toolchain, including pip (Python version >= 3.3).

How Face Detection Works

Human face detection uses algorithms called classifiers to find human faces within a given image. These classifiers are essentially models trained on many images, both with and without faces. The models usually start by scanning for human eyes, one of the easiest features to detect, and might then try to detect other features like the iris, eyebrows, mouth, or nose. Once a candidate facial region is found, additional tests are usually carried out to verify that the region is a face. The number of training images affects accuracy: a model trained on 1,000 images is generally less accurate than one trained on 100,000 images.

Human face detection algorithms typically fall into one of four categories, or a hybrid of them:

- Knowledge-based Face Detection: Also known as rule-based face detection, this method relies on a set of rules developed by humans according to common knowledge. Human faces have a set of eyes, a nose, and a mouth, all within certain positions and distances from each other, and this forms the basis of the rule set. The main difficulty is building an appropriate rule set: rules that are too general yield many false positives, while rules that are too detailed tend to miss real faces. Another major drawback is that the method does not work well across all skin tones and lighting conditions.

- Feature-based Face Detection: This method extracts structural features of the face. A classifier is trained on these features to differentiate facial from non-facial regions, and is then used to detect faces. Noise and lighting can sometimes negatively affect this method.

- Appearance-based Face Detection: This method employs machine learning and statistical analysis to find the relevant characteristics of face images and extract features from them. Several algorithms fall under this method.

- Template matching: This method detects faces by finding a correlation between predefined or parameterized face templates and the input image.
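As a rough illustration of the template matching idea in the list above, the sketch below slides a small template over an image and scores each position with zero-mean normalized cross-correlation. It uses plain Python lists instead of real images, and the function names `ncc` and `best_match` are hypothetical helpers invented for this example, not OpenCV functions.

```python
from math import sqrt


def ncc(patch, template):
    """Zero-mean normalized cross-correlation of two equal-size 2D lists."""
    p = [value for row in patch for value in row]
    t = [value for row in template for value in row]
    mean_p = sum(p) / len(p)
    mean_t = sum(t) / len(t)
    numerator = sum((a - mean_p) * (b - mean_t) for a, b in zip(p, t))
    denominator = sqrt(
        sum((a - mean_p) ** 2 for a in p) * sum((b - mean_t) ** 2 for b in t)
    )
    # A flat patch or template has no variance, so report no correlation.
    return numerator / denominator if denominator else 0.0


def best_match(image, template):
    """Slide the template over the image; return (score, x, y) of the best position."""
    template_height, template_width = len(template), len(template[0])
    best = (-2.0, 0, 0)  # Scores lie in [-1, 1], so -2 is below any real score.
    for y in range(len(image) - template_height + 1):
        for x in range(len(image[0]) - template_width + 1):
            patch = [row[x:x + template_width] for row in image[y:y + template_height]]
            score = ncc(patch, template)
            if score > best[0]:
                best = (score, x, y)
    return best
```

A score close to 1 means the patch and the template vary in the same way, which is why this approach is sensitive to changes in scale and pose.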

To improve human face detection, certain techniques are often used including:

- Removing the image background to help reveal face boundaries.

- Converting images to grayscale, which separates the luminance plane from the chrominance plane. The luminance plane is more important for distinguishing visual features in an image. Processing is also much faster in grayscale than in color, because a grayscale image has only one channel compared to three in a color image (RGB, HSV).
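To make the grayscale step concrete, here is a minimal sketch of a weighted luminance conversion in plain Python. The weights (0.299 for red, 0.587 for green, 0.114 for blue) are the ones OpenCV documents for its color-to-gray conversion; the helper names and the rounding are assumptions made for this illustration and may not match OpenCV's output bit for bit.

```python
def bgr_to_gray(pixel):
    """Weighted luminance of one (blue, green, red) pixel, rounded to an int."""
    blue, green, red = pixel
    return round(0.114 * blue + 0.587 * green + 0.299 * red)


def image_to_gray(image):
    """Convert a 2D list of (blue, green, red) pixels to a 2D list of gray values."""
    return [[bgr_to_gray(pixel) for pixel in row] for row in image]
```

Each pixel shrinks from three values to one, which is where the speed-up in later processing comes from.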

Choosing a Classifier

OpenCV has two pre-trained classifiers that can be readily used:

- Haar classifier

- Local Binary Pattern (LBP) classifier

This guide uses the Haar classifier to build a human face detection application.

Haar Classifier

Haar is a feature-based cascade classifier that uses a machine learning approach for object detection and is capable of processing images quickly with high detection rates. Cascade classifiers make use of several classifiers and use the output from a previous classification as additional information for the next classifier.
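The cascade idea can be sketched in a few lines: a window passes through a sequence of stages, and it is rejected as soon as one stage says no, so most non-face windows are discarded cheaply. The two stage functions below are hypothetical toy tests on a flat list of pixel values; real cascade stages are learned from training data.

```python
def run_cascade(window, stages):
    """Return (accepted, stages_evaluated); stop at the first stage that rejects."""
    for evaluated, stage in enumerate(stages, start=1):
        if not stage(window):
            return False, evaluated
    return True, len(stages)


# Hypothetical toy stages, for illustration only.
def has_enough_contrast(window):
    return max(window) - min(window) >= 50


def top_darker_than_bottom(window):
    half = len(window) // 2
    return sum(window[:half]) < sum(window[half:])


STAGES = [has_enough_contrast, top_darker_than_bottom]
```

A flat window such as `[10, 10, 10, 10]` is rejected by the first stage, so the second stage never runs for it.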

The Haar cascade classifier works by detecting Haar-like features, which are digital image features used in object recognition.
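A Haar-like feature is commonly described as the difference between the pixel sums of adjacent rectangles, computed quickly with an integral image (summed-area table). The sketch below shows one two-rectangle feature under that description; the function names are hypothetical and this is not OpenCV's internal implementation.

```python
def integral_image(image):
    """Summed-area table with an extra leading row and column of zeros."""
    height, width = len(image), len(image[0])
    table = [[0] * (width + 1) for _ in range(height + 1)]
    for y in range(height):
        row_sum = 0
        for x in range(width):
            row_sum += image[y][x]
            table[y + 1][x + 1] = table[y][x + 1] + row_sum
    return table


def rect_sum(table, x, y, width, height):
    """Sum of the pixels in a rectangle using four table lookups."""
    return (
        table[y + height][x + width]
        - table[y][x + width]
        - table[y + height][x]
        + table[y][x]
    )


def two_rect_vertical_feature(table, x, y, width, height):
    """Sum of the bottom half minus the sum of the top half of a region."""
    half = height // 2
    top = rect_sum(table, x, y, width, half)
    bottom = rect_sum(table, x, y + half, width, half)
    return bottom - top
```

Once the table is built, every rectangle sum costs the same four lookups regardless of its size, which is what lets a detector evaluate many features per window.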

Setting Up The Project Virtual Environment

To create an isolated virtual environment for your application:

Install the virtualenv Python package:

$ pip install virtualenv

Create the project directory:

$ mkdir face_detector

Navigate into the new directory:

$ cd face_detector

Create the virtual environment:

$ python3 -m venv env

This creates a new folder named env containing scripts to control the virtual environment, including program libraries.

Activate the virtual environment:

$ source env/bin/activate
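One way to confirm that the virtual environment is active is to compare the interpreter prefixes from inside Python. This is a small sketch using only the standard library; `in_virtual_environment` is a hypothetical helper name.

```python
import sys


def in_virtual_environment():
    """True when the interpreter runs inside a venv-style virtual environment."""
    # Inside a virtual environment, sys.prefix points at the environment
    # while sys.base_prefix still points at the base Python installation.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


print("Virtual environment active:", in_virtual_environment())
```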

Installing OpenCV

To install OpenCV in Python:

$ pip install opencv-python

This installs the main OpenCV modules needed for this application.
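To check that the installation worked without importing the whole library, a short standard-library sketch like the following can be used. The helper name `is_module_installed` is made up for this example.

```python
import importlib.util


def is_module_installed(name):
    """True if a top-level module with this name can be found by the import system."""
    return importlib.util.find_spec(name) is not None


if is_module_installed("cv2"):
    print("OpenCV is importable")
else:
    print("OpenCV not found; run: pip install opencv-python")
```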

Building Face Detection For Images

To build the face detection application, create a main.py file in the project directory:

$ touch main.py

Open the file in your text editor.

Import OpenCV

Import OpenCV by adding the following line:

import cv2

Load Image

This guide uses the image below:

Download the image, save it as group.jpg, and place it in the project directory. The project directory should look like this:

.
├── env/
├── main.py
└── group.jpg

Add the following line to main.py to load the image:

# Load image
test_image = cv2.imread('group.jpg')

The imread function takes two parameters: the filename of the image to load, and an optional flag that specifies the mode in which to read the file. The flag can take one of three values:

- cv2.IMREAD_COLOR or 1: the image is converted to a 3-channel BGR color image with no transparency channel before it is loaded into the program.

- cv2.IMREAD_GRAYSCALE or 0: the image is converted to a single-channel grayscale image before it is loaded into the program.

- cv2.IMREAD_UNCHANGED or -1: the image is loaded as is.

Omitting the flag parameter defaults the mode to cv2.IMREAD_COLOR.
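One detail worth guarding against: imread does not raise an error when the file is missing or unreadable; it returns None, and the failure only surfaces later. The sketch below wraps any reader function with that check. `load_or_raise` is a hypothetical helper, and the reader is passed in as an argument so the check can be tried without a real image.

```python
def load_or_raise(path, reader):
    """Call reader(path) and raise if it returns None, as cv2.imread does on failure."""
    image = reader(path)
    if image is None:
        raise FileNotFoundError(f"Could not read image: {path}")
    return image
```

In main.py this could be used as `test_image = load_or_raise('group.jpg', cv2.imread)`, so a wrong filename fails immediately with a clear message.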

Convert Image To Grayscale

Convert the image to grayscale before applying the face detection algorithm to yield faster and more accurate results.

Note: Passing cv2.IMREAD_GRAYSCALE to imread would have loaded the image in grayscale from the start:

test_image = cv2.imread('group.jpg', cv2.IMREAD_GRAYSCALE)

But loading it in grayscale would have left no color copy on which to draw the detected faces.

To convert the image to grayscale, add the following lines:

# Convert to grayscale
test_image_gray = cv2.cvtColor(test_image, cv2.COLOR_BGR2GRAY)

The cvtColor function converts images between color spaces. It takes two arguments: the source image and the color space conversion code. Passing cv2.COLOR_BGR2GRAY specifies a conversion from BGR color to grayscale. The function returns the converted grayscale image.

Initialize the Cascade Classifier

As mentioned earlier, this guide uses the pre-trained Haar cascade classifier that comes bundled with OpenCV for human face detection. OpenCV also includes many other classifiers for detecting eyes, smiles, lower bodies, full bodies, and other features. These classifiers are XML files located in the opencv/data/haarcascades folder.

The classifier used in this guide is the haarcascade_frontalface_default.xml for detecting faces. To initialize the face detector, add the following lines:

# Initialize the face detector
face_classifier = cv2.data.haarcascades + 'haarcascade_frontalface_default.xml'
face_cascade = cv2.CascadeClassifier(face_classifier)

cv2.data.haarcascades returns the path of the directory containing the Haar cascade classifier files. Appending haarcascade_frontalface_default.xml to it gives the full path of the face classifier to use.

The CascadeClassifier constructor loads and initializes the classifier from the file passed as an argument.
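A classifier created from a wrong path does not fail right away, so it can help to verify the file before loading it. The following sketch builds the path and checks that the file exists; `cascade_path` is a hypothetical helper written for this guide, not part of OpenCV.

```python
import os


def cascade_path(directory, filename="haarcascade_frontalface_default.xml"):
    """Join directory and filename, raising if the cascade file does not exist."""
    path = os.path.join(directory, filename)
    if not os.path.isfile(path):
        raise FileNotFoundError(f"Cascade file not found: {path}")
    return path
```

In main.py this could be called as `cv2.CascadeClassifier(cascade_path(cv2.data.haarcascades))`. As a second check, the classifier's `empty()` method returns True when nothing was loaded.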

Detect Faces

To detect faces, add the following lines:

# Detect the faces in the image
faces = face_cascade.detectMultiScale(test_image_gray)

# Print number of faces detected
print(f"{len(faces)} faces detected in the image")

Calling the detectMultiScale method on the initialized cascade classifier detects faces in the image passed as an argument. The method returns one rectangle, given as (x, y, width, height), for each detected face. detectMultiScale also accepts optional arguments:

- scaleFactor - specifies the image size reduction at each image scale. This option offers compensation for faces that may be farther away from the camera in group photos. This option defaults to 1.1; increasing it to a higher value like 1.4 will result in faster detection, but with the risk of missing some faces altogether.

- minNeighbors - specifies how many neighbors each candidate rectangle should have to preserve it. This option defaults to 3. This affects the quality of detected faces, with higher values resulting in fewer detections but higher quality. The recommendation is a value from 3 to 6.

- flags - has the same meaning for an old cascade as in the function cvHaarDetectObjects. It is not used for a new cascade. This option defaults to 0.

- minSize - minimum possible object size. Objects smaller than this are ignored. This option defaults to (0, 0).

- maxSize - maximum possible object size. Objects larger than this are ignored. This option defaults to (0, 0). If maxSize == minSize, the model is evaluated on a single scale.
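To see why a larger scaleFactor is faster but coarser, the sketch below counts how many scales fit between a base detection window and the image size. It is a simplified model of the multi-scale search, not what detectMultiScale does internally, and the 24-pixel base window is an assumption made for this example.

```python
def pyramid_scales(image_size, window_size=24, scale_factor=1.1):
    """List the scale multipliers at which the window still fits in the image."""
    if scale_factor <= 1:
        raise ValueError("scale_factor must be greater than 1")
    scales = []
    scale = 1.0
    while window_size * scale <= image_size:
        scales.append(round(scale, 3))
        scale *= scale_factor
    return scales


fine = pyramid_scales(480, scale_factor=1.1)
coarse = pyramid_scales(480, scale_factor=1.4)
print(len(fine), "scales at 1.1 versus", len(coarse), "scales at 1.4")
```

Fewer scales mean fewer passes over the image, but a face whose size falls between two widely spaced scales is more likely to be missed.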

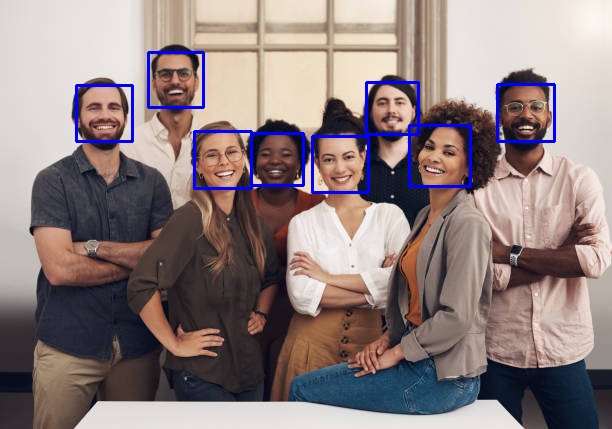

Drawing Over Detected Faces

To visualize the face detection in the image, draw rectangles over the faces by adding the following lines:

# Draw a blue rectangle over every face
for x, y, width, height in faces:
    cv2.rectangle(test_image, (x, y), (x + width, y + height), color=(255, 0, 0), thickness=2)

The rectangle function draws a rectangle over an image. It takes five parameters:

- Image to draw a rectangle over.

- Starting coordinates of the rectangle, represented as a tuple of two values (x, y).

- Ending coordinates of the rectangle, also represented by a tuple of two values (x, y).

- The color of the rectangle border line, represented as a BGR tuple.

- The thickness of the rectangle border line. It is an optional value.

The code above draws a rectangle with border line thickness 2 with the color blue (BGR - (255, 0, 0)) over all the faces detected by the Haar cascade classifier.
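Because the detector reports each face as (x, y, width, height) while rectangle expects two corner points, the conversion is worth isolating. The sketch below shows that conversion plus a small helper that picks the largest detection; both function names are hypothetical and work on plain tuples.

```python
def to_corners(rect):
    """Convert (x, y, width, height) to ((left, top), (right, bottom))."""
    x, y, width, height = rect
    return (x, y), (x + width, y + height)


def largest_face(faces):
    """Return the rectangle with the largest area, or None if there are none."""
    return max(faces, key=lambda rect: rect[2] * rect[3], default=None)
```

For example, a detection of `(10, 20, 30, 40)` becomes the corner pair `(10, 20)` and `(40, 60)`.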

Saving The Image

To save the image with the rectangles drawn over the faces, add the following line:

# Save the new image with rectangles drawn
cv2.imwrite("group_detected.jpg", test_image)

Running The Code

For reference, the complete code:

import cv2

# Load image
test_image = cv2.imread('group.jpg')

# Convert to grayscale
test_image_gray = cv2.cvtColor(test_image, cv2.COLOR_BGR2GRAY)

# Initialize the face detector
face_classifier = cv2.data.haarcascades + 'haarcascade_frontalface_default.xml'
face_cascade = cv2.CascadeClassifier(face_classifier)

# Detect the faces in the image
faces = face_cascade.detectMultiScale(test_image_gray)

# Print number of faces detected
print(f"{len(faces)} faces detected in the image")

# Draw a blue rectangle over every face
for x, y, width, height in faces:
    cv2.rectangle(test_image, (x, y), (x + width, y + height), color=(255, 0, 0), thickness=2)

# Save the new image with rectangles drawn
cv2.imwrite("group_detected.jpg", test_image)

Running the code prints:

8 faces detected in the image

The Haar cascade classifier detected eight faces in the image. Running the code also creates a new copy of the image in the project directory, group_detected.jpg, with each detected face surrounded by a blue rectangle:

The program successfully identified all eight faces in the image.

Note: Some faces in an image may not be detected accurately because of factors such as varying lighting conditions and the presence of glasses.

Adding Face Detection Through WebCam

Another common task is to add human face detection through a webcam. Because a video is a sequence of frames (images), face detection through a webcam or external camera requires reading the video input and processing each frame.

Program Code

Create a new video.py file within the project directory and add the following lines:

import cv2

# Initialize the face detector
face_classifier = cv2.data.haarcascades + 'haarcascade_frontalface_default.xml'
face_cascade = cv2.CascadeClassifier(face_classifier)

# Create a new cam object
cap = cv2.VideoCapture(0)
if not cap.isOpened():
    print("Cannot open camera")
    exit()

while True:
    # Read the frame from the cam
    ret, frame = cap.read()
    # Stop if no frame could be read
    if not ret:
        break

    # Convert to grayscale
    frame_gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

    # Detect all the faces in the frame
    faces = face_cascade.detectMultiScale(frame_gray)

    # Draw a blue rectangle over every face
    for x, y, width, height in faces:
        cv2.rectangle(frame, (x, y), (x + width, y + height), color=(255, 0, 0), thickness=2)

    cv2.imshow("frame", frame)

    # Wait 1 ms for a key press and exit when 'q' is pressed
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
cv2.destroyAllWindows()

Calling VideoCapture creates a video capture object that reads frames from a video source. It takes the video source as an argument; passing 0 selects the first camera, which is usually the built-in webcam if the system has one. If the system has more than one camera connected, each additional camera gets the next device index, such as 1, 2, or 3.

Sometimes, an error might occur when trying to open the camera. The isOpened method returns True or False depending on whether the VideoCapture stream was successfully opened.

Next, create a continuous frame-by-frame loop. The read method grabs the current frame and returns two values: a boolean that is True if a frame was grabbed, and the frame itself. The loop exits if no frame could be read. The classifier then runs on a grayscale version of the frame, and the code draws a blue rectangle over every detected face.

The imshow function displays an image in a window. It takes two arguments: a string naming the window and the image to display. Calling imshow displays the newly drawn frame in a window named 'frame'.

if cv2.waitKey(1) & 0xFF == ord('q'):
    break

The waitKey function waits the given number of milliseconds for a key press and returns the key code, or -1 if no key was pressed. Passing 0 would block until a key is pressed, showing only a single frame, so the loop waits 1 ms per frame and exits when the q key is pressed.

Release the capture stream using the release method, and close all windows before the program execution ends using the destroyAllWindows function.

Running video.py spawns a webcam window if it finds the system webcam and detects faces in the video stream.
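The value returned by cv2.waitKey is an integer key code, or -1 when no key was pressed within the timeout, so a loop-exit test usually compares it against one specific key. The sketch below isolates that comparison in a hypothetical helper, `is_quit_key`, which masks the code to its low byte because some platforms set additional high bits.

```python
def is_quit_key(key_code, quit_char="q"):
    """True if the low byte of key_code matches quit_char; -1 means no key press."""
    if key_code == -1:
        return False
    return (key_code & 0xFF) == ord(quit_char)
```

Inside a capture loop this could be written as `if is_quit_key(cv2.waitKey(1)): break`.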

Conclusion

This guide covered how to build human face detection with OpenCV in Python. For more information, check the OpenCV official website.
