DeepSeek V3.2 (685B) Validation Testing

Updated on 11 March, 2026

Validation benchmarks for DeepSeek V3.2 (685B parameters) on 8x AMD Instinct MI325X GPUs.

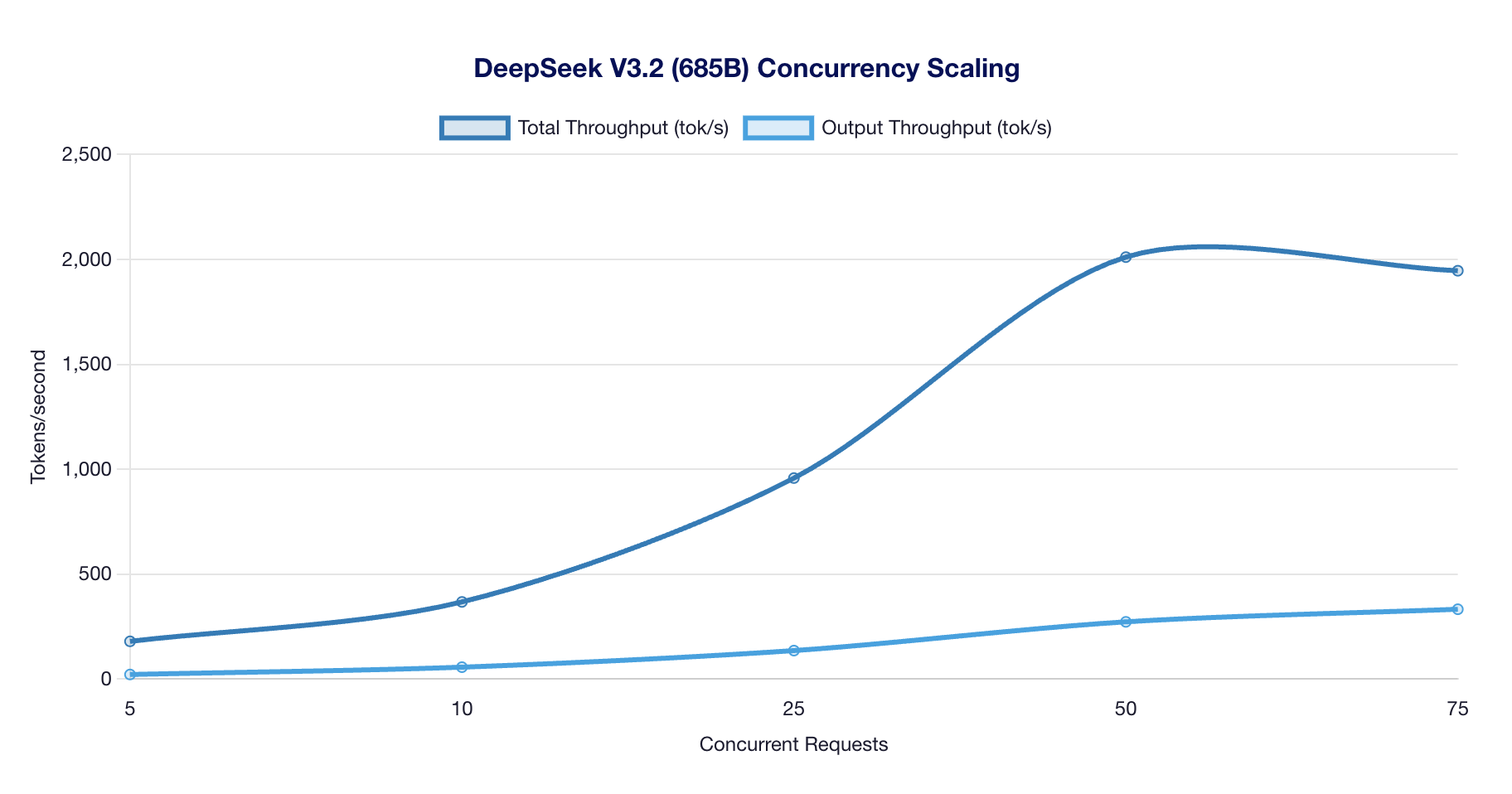

Concurrency Scaling

Scaling Results

| Concurrent Requests | Throughput (tok/s) | Output (tok/s) | p99 Latency (s) | Status |
|---|---|---|---|---|
| 5 | 180 | 22 | 8.93 | OK |
| 10 | 368 | 57 | 9.43 | OK |
| 25 | 958 | 136 | 8.94 | OK |
| 50 | 2,011 | 273 | 8.44 | OK |
| 75 | 1,946 | 333 | 9.10 | SATURATED |

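Observations:

- Slightly better than linear scaling from 5 to 50 concurrent requests: throughput grows about 11x (180 → 2,011 tok/s) for a 10x increase in concurrency, while p99 latency stays between 8.44 s and 9.43 s
- Peak throughput in this sweep is 2,011 tok/s at 50 concurrent requests
- Saturation is flagged at 75 concurrent: total throughput dips slightly to 1,946 tok/s (from 2,011), even though output throughput still rises to 333 tok/s (from 273)
- The MLA (Multi-head Latent Attention) architecture batches efficiently up to the saturation point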
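Dividing total throughput by the number of concurrent requests makes the saturation point easier to see. The minimal sketch below hard-codes the values from the Scaling Results table and prints, for each row, the throughput per request and an efficiency ratio relative to the 5-concurrent baseline. The helper name `per_request_efficiency` is hypothetical and not part of any benchmark tool.

```bash
#!/usr/bin/env bash
# Per-request throughput and scaling efficiency from the Scaling Results table.
# Values are copied from the table; per_request_efficiency is a hypothetical helper.
set -euo pipefail

# Columns: concurrent requests, total throughput (tok/s)
scaling_data='5 180
10 368
25 958
50 2011
75 1946'

per_request_efficiency() {
  # Prints: concurrency, tok/s per request, efficiency vs. the first row
  LC_ALL=C awk '
    NR == 1 { base = $2 / $1 }
    { pr = $2 / $1; printf "%d %.1f %.2f\n", $1, pr, pr / base }
  ' <<<"$scaling_data"
}

per_request_efficiency
```

Per-request throughput stays in a narrow 36–40 tok/s band up to 50 concurrent and then drops by about a third at 75, consistent with the SATURATED label in the table.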

Stress Tests

| Test | Throughput (tok/s) | Output (tok/s) | p99 Latency (s) | Status |
|---|---|---|---|---|
| Long Output (500 tokens) | 288 | 227 | 20.55 | OK |
| Long Context (4K tokens) | 1,801 | 63 | 9.64 | OK |

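Key findings:

- Long output generation (500 tokens): 288 tok/s total, 227 tok/s of it output, with a 20.55 s p99 latency; that is more than double the roughly 8.4–9.4 s p99 of the Concurrency Scaling runs, consistent with each request decoding 500 tokens
- Long context (4K tokens): 1,801 tok/s total but only 63 tok/s of output, suggesting that most of the measured throughput comes from processing the 4K-token prompts; p99 latency (9.64 s) stays close to the Concurrency Scaling runs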
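The contrast between the two stress tests is clearer as a ratio. This sketch computes the output share of total throughput for each test, assuming (this is not stated in the report) that the Throughput column counts prompt and generated tokens together, so that total minus output approximates prompt-processing throughput. The helper name `output_share` is hypothetical.

```bash
#!/usr/bin/env bash
# Output tokens as a share of total throughput for the two stress tests.
# Assumption: "Throughput" counts prompt plus generated tokens.
set -euo pipefail

# Columns: test name, total throughput (tok/s), output throughput (tok/s)
stress_data='long_output 288 227
long_context 1801 63'

output_share() {
  # Prints: test name, output share (%), implied non-output tok/s (total - output)
  LC_ALL=C awk '{ printf "%s %.1f %d\n", $1, 100 * $3 / $2, $2 - $3 }' <<<"$stress_data"
}

output_share
```

Under that assumption, output tokens make up about 79% of the long-output throughput but only about 3.5% of the long-context throughput.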

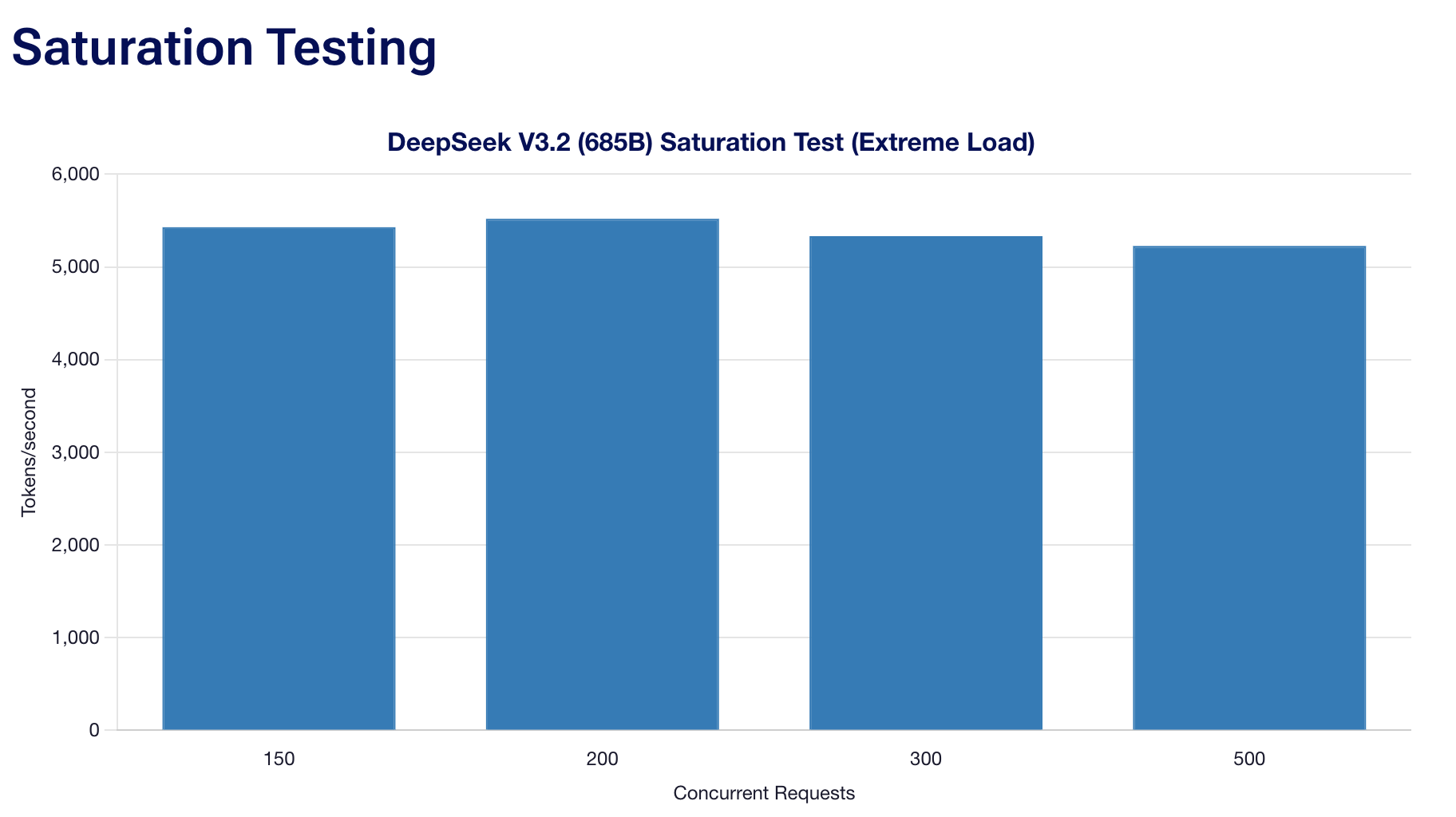

Saturation Testing

Extreme Load Results

| Concurrent Requests | Throughput (tok/s) | Success Rate | p99 Latency (s) | Status |
|---|---|---|---|---|
| 150 | 5,428 | 100% | 4.36 | OK |
| 200 | 5,519 | 100% | 4.39 | SATURATED |
| 300 | 5,332 | 100% | 4.64 | SATURATED |
| 500 | 5,226 | 100% | 4.53 | SATURATED |

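Observations:

- Peak throughput of 5,519 tok/s is reached at 200 concurrent requests
- Saturation begins at 200 concurrent requests: going from 150 to 200 adds less than 2% throughput, and higher concurrency slightly reduces it (5,226 tok/s at 500)
- The success rate stays at 100% even at 500 concurrent requests
- Throughput holds at roughly 5,200–5,500 tok/s across 150–500 concurrent requests, with p99 latency between 4.36 s and 4.64 s
- These runs show lower p99 latency at far higher concurrency than the Concurrency Scaling sweep (8.44–9.43 s), so the two tests appear to use different request profiles and their saturation points are not directly comparable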
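The saturation point can be read off the step-to-step change in throughput. This sketch, using the values from the Extreme Load Results table and a hypothetical helper `marginal_gain`, prints the percentage change between consecutive concurrency levels.

```bash
#!/usr/bin/env bash
# Step-to-step throughput change across the Extreme Load Results table.
# Values are copied from the table; marginal_gain is a hypothetical helper.
set -euo pipefail

# Columns: concurrent requests, throughput (tok/s)
saturation_data='150 5428
200 5519
300 5332
500 5226'

marginal_gain() {
  # For each row after the first, print the % change versus the previous row.
  LC_ALL=C awk '
    NR > 1 { printf "%d->%d %+.1f%%\n", prev_c, $1, ($2 / prev_t - 1) * 100 }
    { prev_c = $1; prev_t = $2 }
  ' <<<"$saturation_data"
}

marginal_gain
```

Only the 150 → 200 step gains throughput (+1.7%); both later steps lose a few percent, which matches the 150–200 range recommended for high-throughput use.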

Recommendations

| Use Case | Concurrent Requests | Expected Throughput (tok/s) |
|---|---|---|
| Low latency | 5–10 | 180–370 |
| Balanced | 25–50 | 960–2,000 |
| High throughput | 150–200 | 5,400–5,500 |
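To check these ranges on another deployment, a concurrency sweep can be run from a machine or container with vLLM installed, against the server started by the launch command below. This is a sketch assuming the `vllm bench serve` subcommand available in recent vLLM releases; the random dataset, the 512/128 input/output lengths, and the prompt counts are hypothetical placeholders, not the settings behind the tables above. Flag names can change between versions, so confirm them with `vllm bench serve --help`. By default the script only prints the commands; set `DRY_RUN=0` to execute them.

```bash
#!/usr/bin/env bash
# Concurrency sweep over the recommended levels using vLLM's benchmark CLI.
# Dataset, input/output lengths, and prompt counts are hypothetical placeholders.
set -euo pipefail

MODEL="deepseek-ai/DeepSeek-V3.2"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print each command; 0 = execute it

# Endpoints of the low-latency, balanced, and high-throughput ranges.
LEVELS=(5 10 25 50 150 200)

# Print the command in dry-run mode, otherwise run it.
run() {
  if [[ "$DRY_RUN" == 1 ]]; then
    echo "$*"
  else
    "$@"
  fi
}

sweep() {
  local c
  for c in "${LEVELS[@]}"; do
    run vllm bench serve --model "$MODEL" \
      --dataset-name random --random-input-len 512 --random-output-len 128 \
      --num-prompts "$((c * 10))" --max-concurrency "$c"
  done
}

sweep
```

Scaling the prompt count with concurrency (10 prompts per concurrent slot here) keeps each level busy for several rounds of requests instead of measuring a single burst.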

Test Configuration

| Parameter | Value |
|---|---|
| Model | deepseek-ai/DeepSeek-V3.2 |
| Test Mode | quick |
| Timestamp | 20260128_192726 |
| Vision Model | No |

Launch Command

```bash
docker run --rm \
  --group-add=video \
  --cap-add=SYS_PTRACE \
  --security-opt seccomp=unconfined \
  --device /dev/kfd \
  --device /dev/dri \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=$HF_TOKEN" \
  --env "VLLM_ROCM_USE_AITER=1" \
  --env "AITER_ENABLE_VSKIP=0" \
  -p 8000:8000 \
  --ipc=host \
  vllm/vllm-openai-rocm:latest \
  --model deepseek-ai/DeepSeek-V3.2 \
  --tensor-parallel-size 8 \
  --block-size 1 \
  --quantization fp8
```
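Because the container publishes vLLM's OpenAI-compatible server on port 8000, a quick readiness check and single-request smoke test can confirm the deployment before benchmarking. This sketch assumes the server is reachable at `localhost:8000` and that `curl` is installed; it polls vLLM's `/health` endpoint and then sends one request to `/v1/chat/completions`, using the same model name passed to `--model`. The helper names `make_payload` and `wait_for_server` are hypothetical.

```bash
#!/usr/bin/env bash
# Readiness check and one-request smoke test for the server launched above.
# Assumptions: the server is published on localhost:8000 and curl is installed.
set -uo pipefail

BASE_URL="${BASE_URL:-http://localhost:8000}"
MODEL="deepseek-ai/DeepSeek-V3.2"

# Build a minimal OpenAI-style chat-completions request body.
# The prompt is inserted verbatim, so it must not contain double quotes.
make_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}],"max_tokens":%d}' \
    "$MODEL" "$1" "$2"
}

# Poll /health until curl reports success or the attempts run out.
wait_for_server() {
  local attempts="$1" delay="$2" i
  for ((i = 1; i <= attempts; i++)); do
    if curl -sf --max-time 5 "$BASE_URL/health" >/dev/null 2>&1; then
      return 0
    fi
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}

# Loading 685B parameters can take a long time; raise MAX_WAIT_ATTEMPTS
# (one check every 10 s) to keep polling instead of checking once.
if wait_for_server "${MAX_WAIT_ATTEMPTS:-1}" 10; then
  curl -s "$BASE_URL/v1/chat/completions" \
    -H "Content-Type: application/json" \
    -d "$(make_payload "Reply with the single word: ready" 16)"
  echo
else
  echo "No healthy server at $BASE_URL yet" >&2
fi
```

For example, `MAX_WAIT_ATTEMPTS=180` keeps polling for up to about 30 minutes while the weights load.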

Test Environment

| Specification | Value |
|---|---|
| GPU | 8x AMD Instinct MI325X |
| VRAM | 256 GB HBM3E per GPU (2 TB total) |
| Architecture | CDNA 3 (gfx942) |
| ROCm | 6.4.2-120 |
| vLLM | 0.14.1 |
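A rough estimate shows how much of each GPU's memory the weights occupy at tensor-parallel size 8. This sketch makes two simplifying assumptions not stated in the report: about 1 byte per parameter for FP8 weights, and an even split of the weights across the 8 ranks. Quantization scales, activations, and framework overhead are ignored, so the real footprint is somewhat higher. The helper `weights_per_gpu` is hypothetical.

```bash
#!/usr/bin/env bash
# Back-of-envelope weight-memory check for the Test Environment.
# Assumptions: ~1 byte per FP8 parameter, weights split evenly across
# tensor-parallel ranks; scales, activations, and KV cache are ignored.
set -euo pipefail

weights_per_gpu() {
  local params_b="$1" tp="$2" vram_gb="$3"   # billions of params, TP size, GB per GPU
  LC_ALL=C awk -v p="$params_b" -v tp="$tp" -v v="$vram_gb" \
    'BEGIN { w = p / tp; printf "%.0f GB weights/GPU, %.0f%% of %d GB\n", w, 100 * w / v, v }'
}

# 685B parameters, tensor parallel size 8, 256 GB per MI325X
weights_per_gpu 685 8 256
```

Under these assumptions the weights take roughly a third of each 256 GB GPU, leaving the remainder for the KV cache and runtime overhead.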