How to Migrate AWS ECS Services to Vultr Kubernetes Engine

Amazon Elastic Container Service (ECS) is a proprietary container orchestration platform that manages Docker containers using AWS-specific task definitions, services, and integrations. While ECS provides deep integration with AWS services, it creates vendor lock-in and limits portability. Kubernetes offers a standardized, cloud-agnostic approach to container orchestration with broader ecosystem support and platform independence.

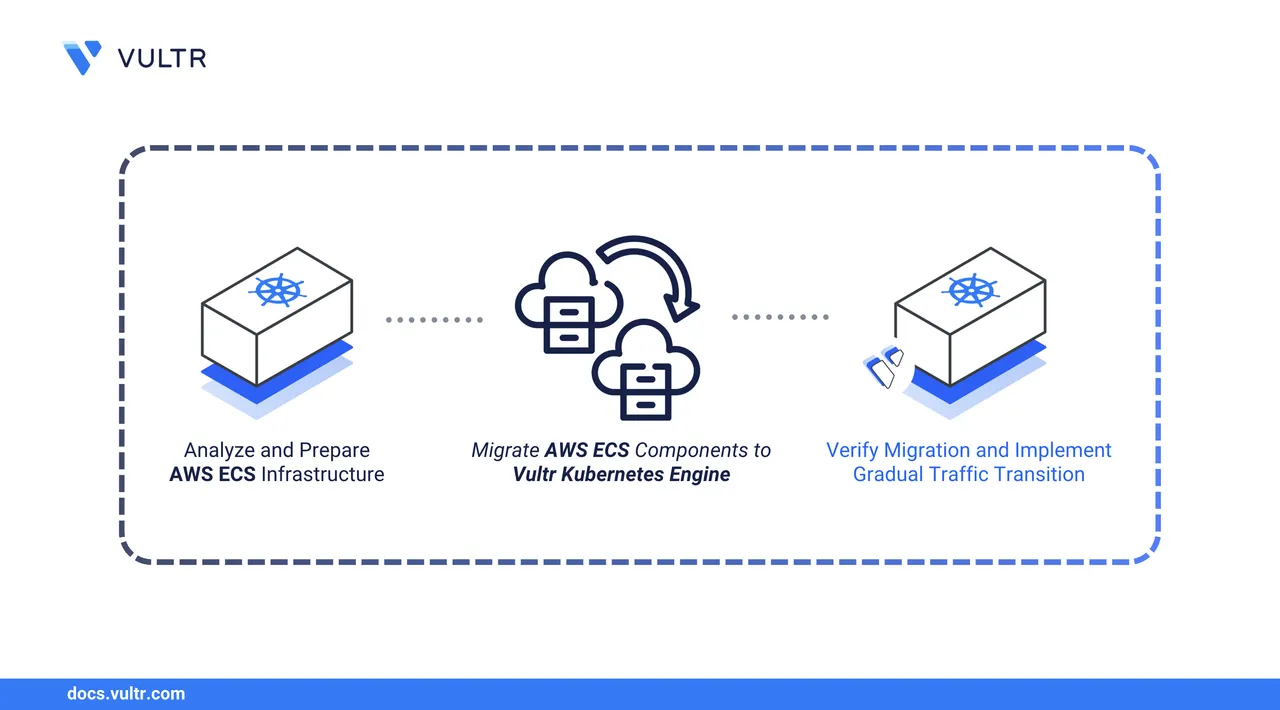

This guide outlines the migration of containerized applications from AWS ECS to Vultr Kubernetes Engine (VKE). It covers the analysis of existing ECS task definitions and services, conversion to Kubernetes manifests, replication of networking and service discovery patterns, migration of secrets and configuration, and deployment strategies that minimize downtime.

Prerequisites

Before you begin, you need to:

- Have a running AWS ECS cluster with services to migrate.

- Have access to your ECS task definitions, service configurations, and environment variables.

- Deploy a Vultr Kubernetes Engine cluster with sufficient capacity for your workloads.

- Install and configure kubectl on your workstation to manage your VKE cluster.

- Install Helm for deploying Kubernetes applications.

- Have access to the AWS CLI configured with appropriate credentials.

Analyze Your ECS Infrastructure

Before migrating, document your existing ECS configuration to understand the components that require conversion. This analysis identifies task definitions, services, load balancers, service discovery configurations, and dependencies.

List all ECS clusters in your AWS account.

console$ aws ecs list-clusters

The output displays cluster ARNs for all ECS clusters in your account.

List services running in your target cluster. Replace CLUSTER-NAME with your actual cluster name.

console$ aws ecs list-services --cluster CLUSTER-NAME

Describe each service to understand its configuration. Replace SERVICE-NAME and CLUSTER-NAME with your actual values.

console$ aws ecs describe-services --cluster CLUSTER-NAME --services SERVICE-NAME > service-config.json

This command exports the service configuration to a JSON file for reference during migration.

Extract the task definition name from the service configuration.

console$ cat service-config.json | jq -r '.services[0].taskDefinition'

The output displays the task definition ARN. Note the task definition family name and revision number for the next step.

Retrieve the task definition details. Replace TASK-DEFINITION-ARN with the ARN from the previous step.

console$ aws ecs describe-task-definition --task-definition TASK-DEFINITION-ARN > task-definition.json

Extract key configuration details from the task definition.

console$ cat task-definition.json | jq '.taskDefinition | {family, cpu, memory, networkMode, containerDefinitions: [.containerDefinitions[] | {name, image, cpu, memory, portMappings, environment, secrets, mountPoints}]}'

The output displays container images, resource allocations, port mappings, environment variables, secrets, and volume mounts. Save this information for converting to Kubernetes manifests.

Document the service dependencies by examining the service configuration file.

console$ cat service-config.json | jq '.services[0] | {loadBalancers, serviceRegistries, networkConfiguration}'

The output shows load balancer attachments, service discovery registrations, and networking settings. Note these configurations for replication in Kubernetes.

Note: ECS awsvpc mode maps each task to an ENI. Kubernetes uses a flat pod network model. If your ECS services relied on Security Groups for east-west isolation, replicate this behavior using Kubernetes NetworkPolicies.
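As a rough sketch of the NetworkPolicy approach mentioned in the note, the following writes a policy that admits traffic to the app=my-app pods only from pods carrying a hypothetical access=my-app label and from pods in a namespace named envoy-gateway-system. The label, the file name, the policy name, and the Envoy Gateway namespace are assumptions for illustration, and enforcement depends on the cluster's network plugin supporting NetworkPolicy.

```shell
# Write a NetworkPolicy that approximates a security-group style allow list.
# Assumptions: application pods are labeled app=my-app, allowed clients carry
# the hypothetical label access=my-app, and the gateway proxies run in a
# namespace named envoy-gateway-system.
cat > my-app-networkpolicy.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: my-app-allow-clients
  namespace: my-app
spec:
  podSelector:
    matchLabels:
      app: my-app
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              access: my-app
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: envoy-gateway-system
      ports:
        - protocol: TCP
          port: 80
EOF
```

Once the namespace and Deployment exist, the file can be applied with kubectl apply -f my-app-networkpolicy.yaml. Adjust the selectors to match the security group rules you documented for the ECS service.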

Create a Kubernetes Namespace

Create a dedicated namespace for your application to isolate resources and simplify management. This provides logical separation from other applications and enables namespace-level resource quotas and access controls.

Create a namespace for your application.

console$ kubectl create namespace my-app

Replace my-app with a descriptive name for your application environment.

Verify that the namespace is created.

console$ kubectl get namespace my-app

Set the namespace as the default for the current context to avoid specifying -n my-app with every command.

console$ kubectl config set-context --current --namespace=my-app

All subsequent kubectl commands in this guide will deploy resources to this namespace. To deploy to a different namespace, either change the context or add the -n namespace-name flag to each command.
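The namespace-level resource quotas mentioned at the start of this section could look like the following sketch. The quota name, file name, and every limit value are placeholders chosen for illustration, not recommendations. Size them from the CPU and memory figures in your ECS task definitions.

```shell
# Write a ResourceQuota manifest for the my-app namespace.
# All numbers below are hypothetical placeholders.
cat > my-app-quota.yaml <<'EOF'
apiVersion: v1
kind: ResourceQuota
metadata:
  name: my-app-quota
  namespace: my-app
spec:
  hard:
    requests.cpu: "4"
    requests.memory: 8Gi
    limits.cpu: "8"
    limits.memory: 16Gi
    pods: "20"
EOF
```

The manifest can be applied with kubectl apply -f my-app-quota.yaml. Once a quota covers CPU or memory requests and limits, pods in the namespace that omit those fields are rejected, so set them on every container.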

Convert ECS Task Definitions to Kubernetes Deployments

ECS task definitions define container images, resource limits, environment variables, and volume mounts. Kubernetes Deployments serve the same purpose using a different YAML structure. Convert your task definitions to Kubernetes manifests by mapping ECS parameters to their Kubernetes equivalents.

The following table maps common ECS task definition parameters to their Kubernetes equivalents.

| ECS Parameter | Kubernetes Equivalent | Notes |
|---|---|---|
| desiredCount | spec.replicas | Number of running tasks or pods |
| image | spec.containers[].image | Container image reference |
| containerPort | spec.containers[].ports[].containerPort | Port exposed by the container |
| cpu (units) | resources.requests.cpu | 1024 ECS units = 1 vCPU = 1000 millicores |
| memory (MiB) | resources.requests.memory | Direct conversion to Mi or Gi |
| environment | env | Static environment variables |
| secrets | env[].valueFrom.secretKeyRef | References Kubernetes Secrets |
| healthCheck | livenessProbe, readinessProbe | Container health monitoring |
| mountPoints | volumeMounts | Volume mounting configuration |
| volumes | volumes | Volume definitions |

Create a directory for Kubernetes manifests.

console$ mkdir k8s-manifests
$ cd k8s-manifests

Create a Deployment manifest file. Replace my-app with your application name.

console$ nano my-app-deployment.yaml

Add the following Deployment configuration. Adjust values based on your ECS task definition.

yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
  labels:
    app: my-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: your-registry/my-app:latest
          ports:
            - name: http
              containerPort: 80
              protocol: TCP
          env:
            - name: APP_ENV
              value: "production"
            - name: LOG_LEVEL
              value: "INFO"
          resources:
            requests:
              cpu: "250m"
              memory: "512Mi"
            limits:
              cpu: "500m"
              memory: "1Gi"
          livenessProbe:
            httpGet:
              path: /health
              port: http
            initialDelaySeconds: 30
            periodSeconds: 10
            timeoutSeconds: 5
            failureThreshold: 3
          readinessProbe:
            httpGet:
              path: /ready
              port: http
            initialDelaySeconds: 10
            periodSeconds: 5
            timeoutSeconds: 3
            failureThreshold: 3

Save and close the file.

Convert CPU values from ECS units to Kubernetes millicores. ECS uses 1024 units per vCPU, while Kubernetes uses 1000 millicores per CPU.

Kubernetes CPU (millicores) = (ECS CPU units / 1024) × 1000

Examples:

* 256 ECS units = (256 / 1024) × 1000 = 250m
* 512 ECS units = (512 / 1024) × 1000 = 500m
* 1024 ECS units = (1024 / 1024) × 1000 = 1000m (1 vCPU)

Convert memory values from MiB to Kubernetes memory format.

ECS: 512 (MiB) → Kubernetes: 512Mi
ECS: 1024 (MiB) → Kubernetes: 1Gi
ECS: 2048 (MiB) → Kubernetes: 2Gi
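The two conversions above can be scripted when you have many task definitions to translate. The following is a minimal sketch. The function names are made up for this example, and the CPU result is truncated to a whole millicore because shell arithmetic is integer-only.

```shell
# Convert ECS CPU units to Kubernetes millicores (1024 units = 1000m).
# Integer division truncates any fractional millicore.
ecs_cpu_to_millicores() {
  echo "$(( $1 * 1000 / 1024 ))m"
}

# Convert an ECS memory value in MiB to a Kubernetes quantity.
# Whole multiples of 1024 MiB are written in Gi, everything else stays in Mi.
ecs_mem_to_k8s() {
  if [ "$(( $1 % 1024 ))" -eq 0 ]; then
    echo "$(( $1 / 1024 ))Gi"
  else
    echo "${1}Mi"
  fi
}

ecs_cpu_to_millicores 256   # prints 250m
ecs_mem_to_k8s 2048         # prints 2Gi
```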

Migrate Secrets and Environment Variables

ECS task definitions reference secrets stored in AWS Secrets Manager or Systems Manager Parameter Store. Kubernetes uses native Secret and ConfigMap resources for sensitive and non-sensitive configuration data respectively.

List secrets referenced in your ECS task definition.

console$ cat task-definition.json | jq '.taskDefinition.containerDefinitions[].secrets'

The output displays secret ARNs from AWS Secrets Manager or Parameter Store.

Retrieve secret values from AWS Secrets Manager. Replace SECRET-NAME with your secret identifier.

console$ aws secretsmanager get-secret-value --secret-id SECRET-NAME --query SecretString --output text | jq .
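For secrets that the task definition pulls from Systems Manager Parameter Store instead of Secrets Manager, a comparable lookup might look like the sketch below. The wrapper function name and the example parameter path are hypothetical. The underlying aws ssm get-parameter call decrypts SecureString values when --with-decryption is set.

```shell
# Fetch one decrypted value from Systems Manager Parameter Store.
# Usage: get_ssm_parameter PARAMETER-NAME
get_ssm_parameter() {
  aws ssm get-parameter \
    --name "$1" \
    --with-decryption \
    --query 'Parameter.Value' \
    --output text
}
```

For example, get_ssm_parameter /my-app/prod/API_KEY prints the value of that (hypothetical) parameter so you can load it into a Kubernetes Secret in the next step.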

Create a Kubernetes Secret for sensitive application data.

console$ kubectl create secret generic my-app-secrets \
    --from-literal=JWT_SECRET='YOUR_JWT_SECRET' \
    --from-literal=API_KEY='YOUR_API_KEY'

Replace the keys and values with your actual secrets retrieved from AWS, and repeat this step for each secret you need to create.
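Passing values with --from-literal leaves them in your shell history. One alternative, sketched here under the assumption that a short-lived local file is acceptable in your environment, is to load the same keys from an env file with the --from-env-file flag. The file name and the helper function name are illustrative.

```shell
# Keep secret values out of shell history by loading them from a file.
# The keys and values are placeholders. Fill in values retrieved from AWS.
umask 077   # files created from here on are readable by the current user only
cat > my-app-secrets.env <<'EOF'
JWT_SECRET=YOUR_JWT_SECRET
API_KEY=YOUR_API_KEY
EOF

# Create the Secret from the file, then delete the local copy on success.
create_secret_from_env_file() {
  kubectl create secret generic my-app-secrets \
    --from-env-file=my-app-secrets.env &&
    rm -f my-app-secrets.env
}
```

Run create_secret_from_env_file after editing the file. The resulting Secret has the same name and keys as the one created with --from-literal, so use one method or the other, not both.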

Create a ConfigMap for non-sensitive configuration.

console$ nano my-app-config.yaml

Add the ConfigMap configuration.

yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: my-app-config
data:
  APP_ENV: "production"
  LOG_LEVEL: "INFO"
  API_ENDPOINT: "https://api.example.com"
  MAX_CONNECTIONS: "100"

Save and close the file.

Apply the ConfigMap to your cluster.

console$ kubectl apply -f my-app-config.yaml

Update the Deployment manifest to reference the Secret and ConfigMap.

console$ nano my-app-deployment.yaml

Replace the env section with envFrom references to the ConfigMap and Secret.

yaml
envFrom:
  - configMapRef:
      name: my-app-config
  - secretRef:
      name: my-app-secrets

This configuration injects all key-value pairs from the referenced ConfigMap and Secret as environment variables inside the container.

Save and close the file.

Replicate ECS Service Discovery with Kubernetes Services

ECS uses AWS Cloud Map for service discovery, allowing services to communicate using DNS names. Kubernetes provides built-in service discovery through Service resources backed by CoreDNS, which automatically create stable DNS records and virtual IPs for accessing pods.

Create a Service manifest for internal communication.

console$ nano my-app-service.yaml

Add the Service configuration for the ClusterIP type, which exposes the application only inside the cluster.

yaml
apiVersion: v1
kind: Service
metadata:
  name: my-app
  labels:
    app: my-app
spec:
  type: ClusterIP
  ports:
    - name: http
      port: 80
      targetPort: 80
      protocol: TCP
  selector:
    app: my-app

In this configuration:

- port is the Service port. Other pods use this port when connecting to the service.

- targetPort is the container port exposed by the application inside each pod.

- Kubernetes forwards traffic from the Service port to the container port on matching pods.

- The selector matches pods labeled app: my-app, dynamically updating endpoints as pods scale or restart.

Save and close the file.

Apply the Service manifest.

console$ kubectl apply -f my-app-service.yaml

Verify that the Service is created and has been assigned a cluster IP address.

console$ kubectl get service my-app

The output displays the Service details, including the CLUSTER-IP, which acts as a stable virtual IP for the application.

Other pods in the cluster can reach the application at my-app.my-app.svc.cluster.local (following the pattern <service-name>.<namespace>.svc.cluster.local) or at the short name my-app when accessing from within the same namespace. Kubernetes automatically maintains this DNS record as pods are created or destroyed.
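When rewriting Cloud Map hostnames in application configuration, the DNS pattern above can be generated with a small helper. This is only an illustrative sketch. The function name is invented, and it assumes the cluster uses the default cluster.local domain.

```shell
# Build the in-cluster DNS name for a Service.
# Usage: service_fqdn SERVICE-NAME NAMESPACE
service_fqdn() {
  echo "$1.$2.svc.cluster.local"
}

service_fqdn my-app my-app   # prints my-app.my-app.svc.cluster.local
```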

Configure Load Balancing with Gateway API

ECS integrates with Application Load Balancers (ALB) and Network Load Balancers (NLB) for external traffic routing. VKE uses the Kubernetes Gateway API with Envoy Gateway for advanced traffic management, providing equivalent functionality to ALB with path-based and host-based routing, along with automated TLS certificate provisioning.

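Before creating the Gateway resource for your application, install the required components by following the Gateway API with TLS Encryption guide. The manifests in this section assume a GatewayClass named eg provided by Envoy Gateway and a cert-manager ClusterIssuer named letsencrypt-prod.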

Create the Gateway Resource

Deploy the Gateway resource to provision a Vultr Load Balancer and assign a public IP address.

Create the Gateway manifest file.

console$ nano app-gateway.yaml

Add the following configuration. Replace myapp.example.com with your actual domain name.

yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: app-gateway
  annotations:
    cert-manager.io/cluster-issuer: letsencrypt-prod
spec:
  gatewayClassName: eg
  listeners:
    - name: http
      protocol: HTTP
      port: 80
      hostname: "myapp.example.com"
      allowedRoutes:
        namespaces:
          from: Same
    - name: https
      protocol: HTTPS
      port: 443
      hostname: "myapp.example.com"
      tls:
        mode: Terminate
        certificateRefs:
          - kind: Secret
            name: app-tls-secret
      allowedRoutes:
        namespaces:
          from: Same

Save and close the file.

Apply the Gateway manifest.

console$ kubectl apply -f app-gateway.yaml

Retrieve the Gateway external IP address.

console$ kubectl get gateway app-gateway

The output displays the Gateway status and assigned IP address. Note this IP address for DNS configuration.

Update your domain's DNS A record to point to the assigned IP address.

Configure HTTPRoute for Application Routing

Create an HTTPRoute to define routing rules from the Gateway to your backend service.

Create the HTTPRoute manifest file.

console$ nano app-route.yaml

Add the following configuration. Replace myapp.example.com with your domain name.

yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: app-route
spec:
  parentRefs:
    - name: app-gateway
      sectionName: https
  hostnames:
    - "myapp.example.com"
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: my-app
          port: 80

Save and close the file.

For path-based routing to multiple services, add additional rules:

yaml
rules:
  - matches:
      - path:
          type: PathPrefix
          value: /api
    backendRefs:
      - name: my-api
        port: 8080
  - matches:
      - path:
          type: PathPrefix
          value: /
    backendRefs:
      - name: my-app
        port: 80

Apply the HTTPRoute manifest.

console$ kubectl apply -f app-route.yaml

Verify the TLS certificate status.

console$ kubectl get certificate

The certificate may take a few minutes to provision. Wait until the READY column shows True.
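The app-route HTTPRoute attaches only to the https listener, so plain HTTP requests on port 80 have no application route. If your ALB redirected HTTP to HTTPS, a second route along the following lines could reproduce that behavior. This is a sketch, not part of the referenced guide. The route name and file name are assumptions, and if cert-manager validates certificates over HTTP-01 through the same listener, confirm that renewals still succeed after adding it.

```shell
# Write an HTTPRoute that redirects all HTTP requests to HTTPS.
# The route name app-http-redirect is a hypothetical choice.
cat > app-redirect-route.yaml <<'EOF'
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: app-http-redirect
spec:
  parentRefs:
    - name: app-gateway
      sectionName: http
  hostnames:
    - "myapp.example.com"
  rules:
    - filters:
        - type: RequestRedirect
          requestRedirect:
            scheme: https
            statusCode: 301
EOF
```

Apply it with kubectl apply -f app-redirect-route.yaml, then check that curl -I http://myapp.example.com returns a 301 response with an https Location header.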

Verify the Migration

After applying all Kubernetes manifests, verify that your application functions correctly on VKE.

Monitor the deployment progress.

console$ kubectl get pods -l app=my-app --watch

The command displays real-time pod status updates. Wait until all pods show STATUS as Running and READY shows the expected ratio (e.g., 1/1). Press Ctrl + C to exit watch mode.

Check the pod logs to verify the application started correctly.

console$ kubectl logs -l app=my-app --tail=50

Verify that the Service is routing traffic to the pods.

console$ kubectl get endpoints my-app

The output displays the pod IP addresses and ports that the Service routes traffic to. The number of endpoints should match your replica count.

Test internal service connectivity from within the cluster.

console$ kubectl run test-pod --image=curlimages/curl:latest --rm -it --restart=Never -- curl http://my-app

This command creates a temporary pod that sends an HTTP request to your service. The output displays the HTTP response, confirming internal networking functions correctly.

Verify the Gateway external IP address.

console$ kubectl get gateway app-gateway

Note the IP address assigned to the Gateway.

Test external connectivity through the Gateway using your domain name.

console$ curl https://myapp.example.com

Replace myapp.example.com with your actual domain. The output displays your application's HTTP response, confirming external access works correctly with TLS encryption.

If you have configured the Horizontal Pod Autoscaler described later in this guide, monitor HPA behavior under load.

console$ kubectl get hpa my-app-hpa --watch

The HPA displays current CPU and memory utilization alongside current and desired replica counts. The autoscaler adjusts replicas automatically based on the metrics.
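DNS propagation and certificate issuance can take a few minutes, so the external connectivity test in this section may fail on the first attempt. A small polling helper, sketched below with an invented function name and arbitrary default retry values, repeats the request until the endpoint answers with HTTP 200.

```shell
# Poll a URL until it returns HTTP 200 or the attempts run out.
# Usage: wait_for_http URL [ATTEMPTS] [DELAY_SECONDS]
wait_for_http() (
  url=$1
  attempts=${2:-30}
  delay=${3:-10}
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -s hides progress, -o discards the body, -w prints only the status code
    code=$(curl -s -o /dev/null -w '%{http_code}' "$url")
    if [ "$code" = "200" ]; then
      echo "ready after $i attempt(s)"
      exit 0
    fi
    sleep "$delay"
    i=$(( i + 1 ))
  done
  echo "gave up after $attempts attempts (last status: $code)" >&2
  exit 1
)
```

For example, wait_for_http https://myapp.example.com 30 10 checks every 10 seconds for up to five minutes. The function body runs in a subshell, so its variables and exit calls do not affect the calling shell.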

Cut Over Traffic from ECS to VKE

After validating that the application is running correctly on Vultr Kubernetes Engine (VKE), perform a controlled cut over to move production traffic from Amazon ECS to the Kubernetes-based deployment.

- Ensure all pods are running, healthy, and passing readiness checks.

- Update your DNS records or traffic manager to point to the Vultr Load Balancer IP address serving the application on VKE.

- Monitor application logs, metrics, and error rates during the transition.

- Keep ECS services running temporarily to allow a quick rollback if required.

After traffic is fully served by VKE and the application is stable, scale ECS services to zero and keep the existing ECS resources available for rollback if needed.
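The first checklist item, confirming that all pods are ready before switching DNS, can be automated as a gate in a cutover script. The helper below is a sketch with an invented function name. It compares the ready and desired replica counts that kubectl reports for a Deployment.

```shell
# Succeed only when every desired replica of a Deployment reports ready.
# Usage: deployment_ready DEPLOYMENT-NAME
deployment_ready() (
  # Prints "<ready>/<desired>", for example 3/3. The ready part is empty
  # while no replica is ready.
  status=$(kubectl get deployment "$1" \
    -o jsonpath='{.status.readyReplicas}/{.spec.replicas}') || exit 1
  ready=${status%%/*}
  desired=${status##*/}
  [ -n "$ready" ] && [ "$ready" = "$desired" ]
)
```

A cutover script could then run deployment_ready my-app && echo "safe to update DNS" and stop if the check fails.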

Configure Auto Scaling

ECS provides Service Auto Scaling to adjust the number of running tasks based on metrics. Kubernetes offers equivalent functionality through the Horizontal Pod Autoscaler (HPA).

Install Metrics Server

The Metrics Server collects resource metrics from Kubelets and provides them to the HPA for scaling decisions.

Install the Metrics Server using the official manifest.

console$ kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.8.1/components.yaml

Verify that the Metrics Server is running.

console$ kubectl get deployment metrics-server -n kube-system

Wait for the Metrics Server to be available.

console$ kubectl wait --for=condition=Available deployment/metrics-server -n kube-system --timeout=120s

Configure Horizontal Pod Autoscaler

Ensure your Deployment specifies resource requests. The HPA calculates utilization as a percentage of each container's requests, so it cannot make scaling decisions without these values.

console$ nano my-app-deployment.yaml

Verify that the containers section includes resource requests.

yaml
resources:
  requests:
    cpu: "250m"
    memory: "512Mi"
  limits:
    cpu: "500m"
    memory: "1Gi"

Save and close the file if you made changes.

Apply the updated Deployment.

console$ kubectl apply -f my-app-deployment.yaml

Create a HorizontalPodAutoscaler manifest.

console$ nano my-app-hpa.yaml

Add the HPA configuration with target metrics.

yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-app-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-app
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 80

Save and close the file.

This HPA configuration targets an average CPU utilization of 70% and an average memory utilization of 80%, both measured against the pods' resource requests, and adjusts the number of pod replicas between 2 and 10 to stay near those targets.
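To get a feel for how those targets translate into replica counts, the core HPA calculation is desired = ceil(currentReplicas × currentUtilization / targetUtilization), clamped to the configured minimum and maximum. The sketch below reproduces only that arithmetic for a single metric. The function name is invented, and it ignores the controller's default 10% tolerance, stabilization windows, and the rule that the largest result wins when several metrics are configured.

```shell
# Estimate the replica count the HPA would request for one metric.
# Usage: hpa_desired_replicas CURRENT_REPLICAS CURRENT_UTIL TARGET_UTIL MIN MAX
hpa_desired_replicas() (
  # Integer ceiling of (replicas * current utilization / target utilization)
  desired=$(( ($1 * $2 + $3 - 1) / $3 ))
  if [ "$desired" -lt "$4" ]; then desired=$4; fi
  if [ "$desired" -gt "$5" ]; then desired=$5; fi
  echo "$desired"
)

hpa_desired_replicas 4 90 70 2 10   # prints 6
```

With 4 replicas averaging 90% CPU against the 70% target, the estimate is ceil(4 × 90 / 70) = 6 replicas.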

Apply the HPA manifest.

console$ kubectl apply -f my-app-hpa.yaml

Verify that the HPA is active and monitoring metrics.

console$ kubectl get hpa my-app-hpa

The output displays current metrics, target metrics, and replica counts. By default, the HPA evaluates metrics every 15 seconds and adjusts replicas as needed.

Update CI/CD Pipelines

Update your continuous integration and deployment pipelines to deploy workloads to Vultr Kubernetes Engine (VKE) instead of Amazon ECS. This change replaces ECS service updates and task definition revisions with Kubernetes-native deployment workflows.

Replace the ECS service update command with a Kubernetes rollout.

console# Old ECS deployment
# aws ecs update-service --cluster my-cluster --service my-service --force-new-deployment

# New Kubernetes deployment
$ kubectl set image deployment/my-app my-app=your-registry/my-app:${BUILD_TAG}
$ kubectl rollout status deployment/my-app

The kubectl set image command updates the container image for the Deployment, while kubectl rollout status monitors the rolling update until all pods are running the new version.

Configure access to the VKE cluster in your CI/CD environment.

- Add the VKE kubeconfig file to your CI/CD environment and set the KUBECONFIG environment variable.

- Verify that the active Kubernetes context points to the target VKE namespace before running deployment commands.
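In a pipeline, the rollout commands from this section are often wrapped so that a failed rollout reverts automatically. The following is one possible sketch. The function name and the 180-second timeout are assumptions, and the image path reuses the your-registry/my-app placeholder from earlier in this guide.

```shell
# Roll out a new image tag and undo the rollout if it does not become healthy.
# Usage: deploy_image IMAGE_TAG
deploy_image() (
  image="your-registry/my-app:$1"
  kubectl set image deployment/my-app "my-app=$image" || exit 1
  if kubectl rollout status deployment/my-app --timeout=180s; then
    echo "deployed $image"
  else
    echo "rollout failed, reverting to the previous revision" >&2
    kubectl rollout undo deployment/my-app
    exit 1
  fi
)
```

A pipeline step could call deploy_image "${BUILD_TAG}" and rely on the non-zero exit status to mark the job as failed after the rollback.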


Related Resources (Optional)

Depending on your application requirements, you may also reference the following topics, which are intentionally out of scope for this walkthrough:

Container Images: Migrating container images from AWS ECR to Vultr Container Registry. Refer to the Vultr Container Registry migration guide.

Persistent Storage: Configuring PersistentVolumeClaims using Vultr Block Storage or Vultr File System. See the VKE PVC guide.

Databases: Migrating database workloads from AWS RDS to Vultr Managed Databases. Refer to the MySQL migration guide or the PostgreSQL migration guide.

Conclusion

You have successfully migrated containerized workloads from Amazon ECS to Vultr Kubernetes Engine by converting ECS task definitions into Kubernetes Deployments, replicating service discovery and load balancing, migrating configuration and secrets, and updating CI/CD pipelines. VKE provides a fully managed, standards-based Kubernetes platform that reduces vendor lock-in while offering flexible networking and production-ready orchestration. For advanced configurations and operational best practices, refer to the official Vultr Kubernetes Engine documentation.